About

I am now updating this website at https://kisaacs.github.io

I am an associate professor at the University of Utah in the School of Computing and SCI Institute. I was previously an assistant professor at the University of Arizona and a member of the Humans, Data, and Computers Lab (HDC). My interests are at the intersection of data visualization and computing systems. I develop new methods of representing complex computing processes for exploration and analysis of their behavior, with applications to high performance computing, distributed computing, data science, program analysis, and optimization. I maintain a survey of such visualizations here. I was awarded an NSF CAREER grant (NSF 1844573) in 2019 and a DOE Early Career Research Program grant in 2021.

Projects & Themes

Improving Node-Link Diagrams for Graph Visualizations in Computing Systems

Graphs are prevalent in computing systems. Control flow graphs describe paths through programs. Call graphs describe relations among functions. Task graphs describe ordering of work units. Dependency graphs describe relationships among libraries. Thus, people working in computing often have need to examine these graphs. However, general graph drawing approaches often cannot scale to the needs of these workers or when they do, provide a drawing that is difficult to interpret. We are developing approaches that identify and incorporate drawing conventions and layout algorithms to address these challenges.

This work has been supported by the National Science Foundation through the Dependencies Visualization project (Grant No. NSF III-1656958) and the Systems Graph Visualization project (Grant No. NSF III-1844573) and by Lawrence Livermore National Laboratory through sub-contracts LLNS B639881 and B630670 .

CFGs:

CFGConf on Github |

CCNav - VAST 2020 |

CFGExplorer - EuroVis 2018 |

CFGExplorer on Github

Graphs in ASCII:

graphterm - TVCG 2019 |

Survey of Graph Tools used by Github Projects

General Graph Drawing:

Alternate Data Abstractions - InfoVis 2020 |

Alternate Data Abstractions Survey Data |

Stress-Plus-X - Graph Drawing 2019 |

Stress-Plus-X library

NSF Project Websites:

Systems Graph Visualization |

Visualizing Dependencies

Traveler - Node-to-Code Visualization for Performance Analysis

Performance analysis is often a highly exploratory endeavor. Visualization can help make sense of the vast quantities of data that can be collected from advanced parallel applications. However, both visual encodings and rendering latency are often hampered by the scale of data to be shown. The problem is exacerbated when multiple datasets are to be analyzed in tandem. Through work supported by the Department of Energy (DOE Early Career Research Award) and the Defense Technical Information Center, we are addressing these challenges to bring scalable, interactive, and interoperable visualizations for performance analysis.

Traveler on Github | Atria - InfoVis '19 (ArXiv)

As part of this effort, in collaboration with the Ste||ar group at LSU and the APEX project at University of Oregon, we are re-thinking (visual) performance analysis from the runtime, through the instrumentation, to the analysis and visual tools. Our work has thus previously served the Phylanx project, building a distributed array toolkit targetting several data science applications.

See also: HPX Website

Interaction in Visualization in Jupyter Notebooks

While some visual analyses are well-suited to stand-alone visualizaton systems, other visual analyses might be a relative small components of a larger endeavor. One example is a script-heavy analyses, such as those contained in Jupyter notebooks. We are exploring needs in visualization for such workflows and how the environment may affect what we consider interaction. To this end, we produced Roundtrip, a library for integrating Javascript visualization in Jupyter notebooks and passing data between those JS visualization and Python scripting cells. Roundtrip has been used for this purpose in the Hatchet performance analysis library as well as in a variant of Atria and has been supported by the Department of Energy (DOE Early Career Research Award) and the Defense Technical Information Center.

Roundtrip on Github | Hatchet on Github | Atria - InfoVis '19 (ArXiv)

Understanding How People Think About Data

A key part in any visualization project is understanding the data to be visualized. There are several factors regarding data, including the format, ranges, meaning, and form. We seek to understand how people conceptuatlize their data and how we, as visualization designers and researchers, can improve our processes of data and workflow discovery. We conducted a study to delve deeper into questions about how people think and cast their data. We identify and define latent data abstractions—data abstractions which are useful and meaningful, but not yet fully gestated. We report on our observations and produce guidance for further data discovery.

Latent Data Abstractions - InfoVis 2020 | Latent Data Abstractions Survey Data

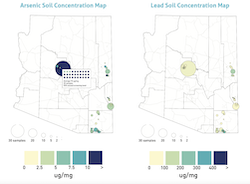

Integrating Citizen Science, Environmental, and Socio-Economic Data

Health is affected by myriad factors including one's environment and circumstances. As part of the UArizona Superfund Research Center, we are gathering data from a wide variety of sources, from government agencies to citizen scientists, to better understand how communities can build resiliencies to environmental hazards. We are integrating this dataset so it is Findable, Accessible, Interoperable, and Reusable (FAIR) while protecting sensitive data contributed by individuals. The data is being made available through a publically accessible site. We are building a visual analytics system to allow stakeholders to explore and download facets of the data.

Past Projects & Themes

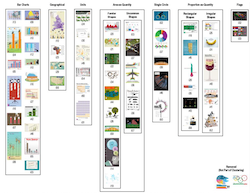

Understanding the Space of Infographics

Infographics are a widely-viewed visualization medium, used to communicate and engage the general public with data on a wide breadth of subjects. To better understand the space of infographics, we derive a classification using the groupings of people from that general public on a corpus of infographics. We found people grouped them by their primary data encoding over other criteria such as aesthetics and complexity and that there was a prevalence in area-as-quantity and proprotion-as-quantity, not typically associated with a common chart type.

Classification of Infographics - Diagram 2018

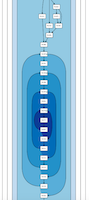

Control Flow Graphs and Dynamic Traces, with Science Up To Par (s2par): Language Agnostic Optimization of Scientific Data Analysis Codes

Parallelizing scientific and data analysis applications can require significant engineering effort. For in-development applications and frequently altered data analysis scripts especially, the time commitment is a significant barrier to the gains of parallelization. Science Up To Par applies dynamic binary analysis and specialization to automatically parallelize scientific applications in a language-independent manner. This project is in collaboration with Michelle Strout and Saumya Debray. In support of these efforts, as part of the NSF-funded Dependencies Visualization project, we are developing visual tools for analyzing control flow graphs and dynamically collected instruction traces. This material is based upon work supported by the National Science Foundation under Grant No. NSF III-1656958.

Visualizing Dependencies Project Website

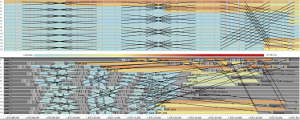

Ravel - Parallel Execution Trace Visualization

Traces are records of actions occurring during a program's execution, such as function calls and communication. They are a powerful tool in performance analysis as they can contain the detailed data necessary to reconstruct exactly what occurred. However, understanding traces is difficult due to the huge number of actions and relationships among all processes. As such, timeline visualizations are frequently employed to explore the data. Unfortunately, timelines become cluttered and difficult to interpret for even moderate numbers of processes.

Ravel focuses on increasing the scalability and utility of trace visualizations via a structure-centric approach which approximates the developers' intended organization of trace events. Visualizing this logical structure untangles communication patterns, allows aggregation in process space, and provides users with much needed context. Analyses using Ravel have led to the discovery and understanding of performance issues in several massively parallel applications.

Visualization - InfoVis '14 | Ravel on github

Logical structure (MPI) - TPDS 2016 | Logical structure (Charm++) - SC15

Boxfish - Linking Domains and Network Visualization

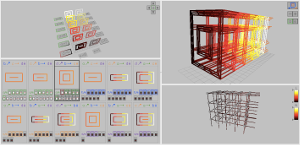

Numerous factors, such as the placement of specific tasks in the hardware, their timing data, and functions of multiple tasks, affect the performance of parallel applications. Boxfish was developed to support visualizing these relationships. Boxfish manages complex filtering of data and linking of visualizations, even when these relationships are not direct or bijective. Several network visualization modules have been developed for Boxfish, including multiple views of 3D torus/mesh networks and a 5D torus/mesh. Analysis using these visualizations in Boxfish has aided in understanding the interactions of task assignment and the underlying system network for both individual applications and the interactions of several applications on a shared system.

Boxfish on github | Boxfish poster abstract - SC12

Visualization Modules

3D torus visualization - InfoVis '12 | 5D torus visualization - VPA '14

Applications

Task mapping - SC12 | Job placement - SC13 | Task mapping, 5D torus - HiPC '14

Scalable Communication Visualization

Traditional statistical plots and graphics for performance analysis may prove ineffective because they do not show data in the most meaningful context. For one library, this meant mapping performance data to its communication overlay. The communication graph grows as the application uses increasing numbers of parallel processes. This requires a visualization that aggregates intelligently to scale with that parallism while still depicting behaviors of interest.